Overlay-backed segments require the N-VDS (NSX-managed Virtual Distributed Switch), a virtual switch specific to NSX-T.

Workload VMs connect to segments hosted on the N-VDS of the compute hosts.

Every transport node in NSX-T runs an instance of the N-VDS.

NSX-T has two types of Transport Nodes:

1. Edge Transport Node

Available in two form factors, VM and bare metal, edge transport nodes are required for services such as routing, VPN, load balancing, connectivity with the physical network, edge firewall and NAT. They represent a pool of capacity and are grouped into an Edge Node Cluster.

2. Hypervisor Transport Node

These are hypervisor hosts that have been configured for NSX.

When a hypervisor transport node is created in NSX-T, an N-VDS is effectively created on the host. This N-VDS has dual uplinks for availability.

While configuring NSX on hosts, you specify the transport zones and the N-VDS settings, which include the uplink profile, TEP IP addressing and uplink information.

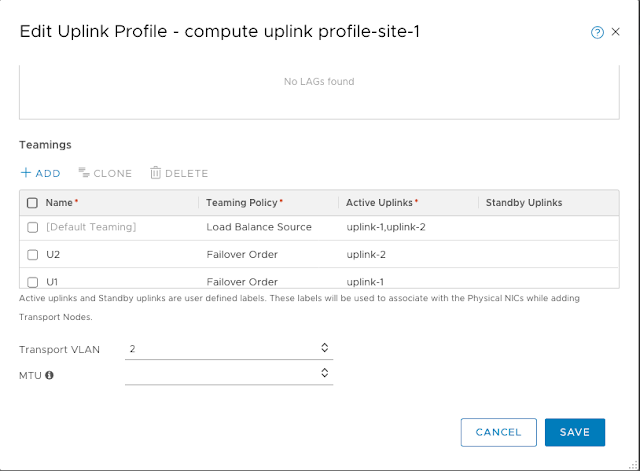

The uplink profile contains the teaming policy, the active and standby uplinks, the transport VLAN for the TEP, and the MTU.
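To make the contents of an uplink profile concrete, here is a minimal Python sketch that models the fields listed above and flags obvious mistakes. The class, its field names and the teaming value are hypothetical illustrations, not the NSX-T API. The 1600-byte MTU floor is the minimum commonly cited for Geneve in NSX-T documentation; the MTU actually used in this lab is not stated in the text, so the default here is an assumption.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class UplinkProfile:
    """Hypothetical model of an uplink profile (field names are illustrative)."""
    name: str
    teaming_policy: str                 # e.g. "FAILOVER_ORDER" (illustrative value)
    active_uplinks: List[str]
    standby_uplinks: List[str] = field(default_factory=list)
    transport_vlan: int = 0             # VLAN carrying the TEP interface
    mtu: int = 1600                     # assumed value, see lead-in

    def validate(self) -> List[str]:
        """Return human-readable problems; an empty list means no obvious issue."""
        problems = []
        if not self.active_uplinks:
            problems.append("at least one active uplink is required")
        overlap = set(self.active_uplinks) & set(self.standby_uplinks)
        if overlap:
            problems.append(f"uplinks listed as both active and standby: {sorted(overlap)}")
        if not 0 <= self.transport_vlan <= 4094:
            problems.append(f"transport VLAN {self.transport_vlan} is out of range")
        if self.mtu < 1600:
            problems.append(f"MTU {self.mtu} is below the 1600 commonly recommended for Geneve")
        return problems


compute_profile = UplinkProfile(
    name="compute-uplink-profile",      # hypothetical name
    teaming_policy="FAILOVER_ORDER",
    active_uplinks=["uplink-1"],
    standby_uplinks=["uplink-2"],
    transport_vlan=2,                   # TEP VLAN used on the compute hosts in this lab
)
print(compute_profile.validate())  # []
```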

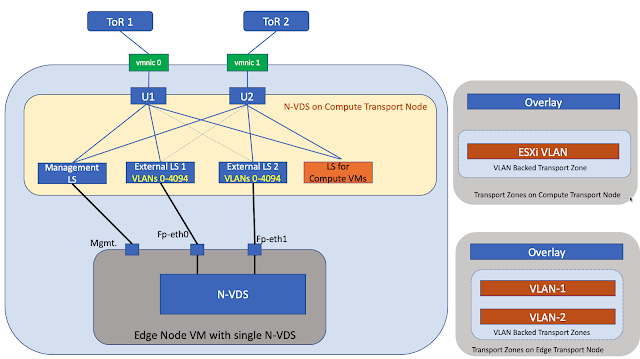

In the above topology, the Edge Node VM uses the N-VDS of the compute transport node for connectivity with the upstream physical network.

The fp-eth0 interface of the Edge Node VM is uplinked to the trunk segment External LS 1.

Likewise, the fp-eth1 interface of the Edge Node VM is uplinked to the trunk segment External LS 2.

The management vNIC of the Edge Node VM is connected to the management segment created on the host, Management LS.

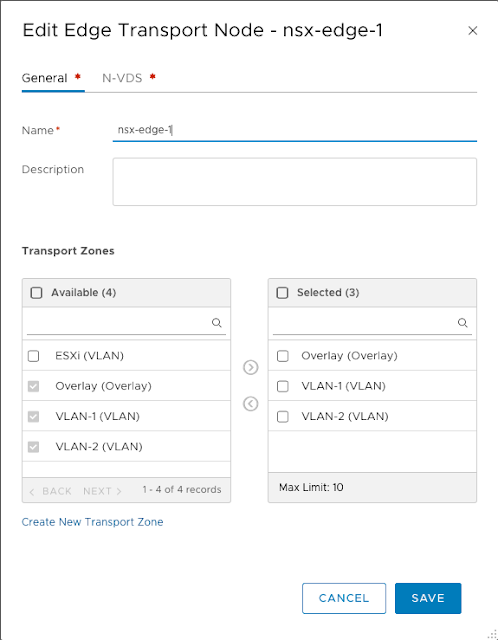

To the right of the above diagram are the transport zones associated with the compute transport node and those associated with the edge transport node.

As you can see, the Overlay transport zone is available on both the compute transport node and the edge transport node.

ESXi is a VLAN-backed transport zone available only on the compute transport node.

VLAN-1 and VLAN-2 are VLAN-backed transport zones available only on the edge transport node.
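The transport zone membership described above can be expressed as simple set arithmetic. A segment is created in one transport zone and is realized only on transport nodes attached to that zone, so the intersection shows which zone lets a segment span both compute and edge nodes. This is an illustrative sketch using the zone names from the lab.

```python
# Transport zones attached to each node type in the lab topology.
compute_tzs = {"Overlay", "ESXi"}
edge_tzs = {"Overlay", "VLAN-1", "VLAN-2"}

# A segment can span both node types only if its transport zone is on both.
shared = compute_tzs & edge_tzs
compute_only = compute_tzs - edge_tzs
edge_only = edge_tzs - compute_tzs

print(sorted(shared))        # ['Overlay']
print(sorted(compute_only))  # ['ESXi']
print(sorted(edge_only))     # ['VLAN-1', 'VLAN-2']
```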

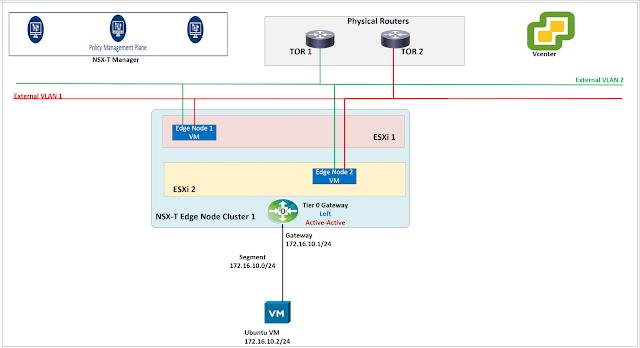

The above is a single-tier topology that uses only a Tier 0 Gateway.

The multi-tier topology with Tier 1 Gateways is recommended because it inherently supports multi-tenancy.

In NSX-T, each tenant has its own Tier 1 Gateway, which is why Tier 1 Gateways are also called tenant gateways.

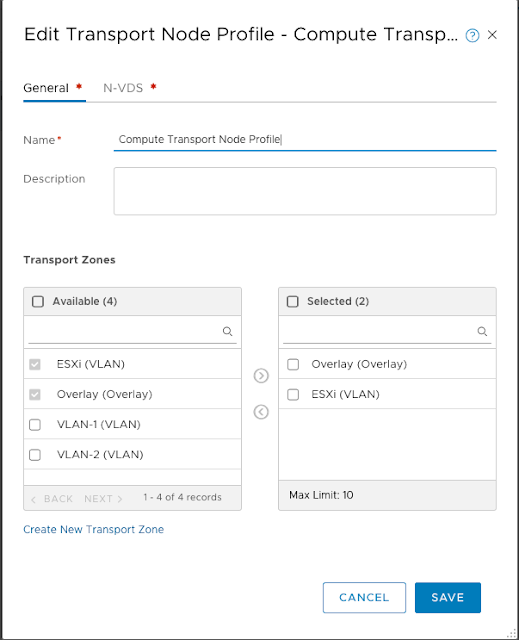

The transport zones are created as shown above:

- The ESXi and Overlay transport zones are used on compute transport nodes.
- The Overlay, VLAN-1 and VLAN-2 transport zones are used on edge transport nodes.

The uplink profile above shows that transport VLAN ID 2 is used for the TEP interface on the compute hosts.

TEP interfaces are used for encapsulation and decapsulation of Geneve traffic.

Geneve is the protocol NSX-T uses to build tunnels between Tunnel End Points (TEPs), which are present on both edge transport nodes and compute transport nodes.
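Geneve encapsulation wraps every overlay frame in outer headers, which is why the underlay MTU matters. The sketch below adds up the fixed header sizes for an IPv4 underlay (outer IPv4 20 bytes, UDP 8 bytes, Geneve base header 8 bytes, inner Ethernet 14 bytes) plus any Geneve options, whose length must be a multiple of 4 bytes and at most 252 bytes. It is a back-of-the-envelope calculation, not an NSX-T tool; room for variable-length options is the usual reason an underlay MTU of 1600 or more is recommended.

```python
OUTER_IPV4_HEADER = 20
OUTER_UDP_HEADER = 8
GENEVE_BASE_HEADER = 8
INNER_ETHERNET_HEADER = 14
MAX_GENEVE_OPTIONS = 252  # 6-bit option-length field counted in 4-byte words


def required_underlay_mtu(inner_ip_mtu: int = 1500, geneve_options: int = 0) -> int:
    """Smallest underlay IP MTU that carries inner packets of inner_ip_mtu bytes unfragmented.

    The outer Ethernet header is not counted because MTU is measured at the IP layer.
    """
    if geneve_options % 4 or not 0 <= geneve_options <= MAX_GENEVE_OPTIONS:
        raise ValueError("Geneve options must be 0..252 bytes in 4-byte multiples")
    inner_frame = INNER_ETHERNET_HEADER + inner_ip_mtu
    return OUTER_IPV4_HEADER + OUTER_UDP_HEADER + GENEVE_BASE_HEADER + geneve_options + inner_frame


print(required_underlay_mtu())          # 1550
print(required_underlay_mtu(1500, 48))  # 1598
```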

In addition to the default teaming policy, two named teaming policies, U1 and U2, are created. Both are failover-based policies with a single uplink each.

These teaming policies can be used to pin the traffic of a specific VLAN-backed segment to a specific uplink.
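The effect of the named teaming policies can be sketched as a lookup: each VLAN-backed segment references a policy, and the policy's failover order decides which uplink carries the traffic. The uplink names, the default policy's active/standby layout and the segment-to-policy mapping below are hypothetical; in NSX-T the named policies are defined in the uplink profile and then selected on the segment.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional, Tuple


@dataclass(frozen=True)
class TeamingPolicy:
    name: str
    active: Tuple[str, ...]
    standby: Tuple[str, ...] = ()


# Default policy plus the two single-uplink, failover-based named policies.
# Uplink names and the default policy's layout are assumptions.
POLICIES: Dict[str, TeamingPolicy] = {
    "default": TeamingPolicy("default", active=("uplink-1",), standby=("uplink-2",)),
    "U1": TeamingPolicy("U1", active=("uplink-1",)),
    "U2": TeamingPolicy("U2", active=("uplink-2",)),
}

# Hypothetical segment-to-policy mapping; unmapped segments use the default policy.
SEGMENT_POLICY: Dict[str, str] = {"EDGE-VLAN-1": "U1", "EDGE-VLAN-2": "U2"}


def uplink_for(segment: str, failed: FrozenSet[str] = frozenset()) -> Optional[str]:
    """Return the uplink carrying a segment's traffic, trying active then standby uplinks."""
    policy = POLICIES[SEGMENT_POLICY.get(segment, "default")]
    for uplink in policy.active + policy.standby:
        if uplink not in failed:
            return uplink
    return None  # a pinned single-uplink policy has nothing to fail over to


print(uplink_for("EDGE-VLAN-1"))                           # uplink-1
print(uplink_for("EDGE-VLAN-2"))                           # uplink-2
print(uplink_for("EDGE-VLAN-1", frozenset({"uplink-1"})))  # None
```

Having no standby is presumably intentional here: each VLAN segment peers with a single upstream router, so the loss of an uplink is left to the routing layer rather than to switch failover.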

We first create a compute transport node profile and then use it to configure NSX on the compute hosts.

The Overlay and ESXi transport zones are selected in the compute transport node profile.

N-VDS settings of Compute Transport Nodes

The above are the N-VDS settings of the compute transport node profile.

vmnic4 and vmnic5 on the hosts are used for the N-VDS.

An IP pool has been created for assigning IP addresses to the TEP interfaces on the compute transport nodes.
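Conceptually, an IP pool hands out TEP addresses from a configured range as transport nodes are prepared. The class below is a hypothetical stand-in for that behavior, not NSX-T code; the subnet and range are made-up values, since the lab's actual pool appears only in the screenshots.

```python
import ipaddress
from typing import Dict


class TepIpPool:
    """Hypothetical static TEP IP pool: hands out addresses from first..last, one per node."""

    def __init__(self, cidr: str, first: str, last: str) -> None:
        self.network = ipaddress.ip_network(cidr)
        self._next = ipaddress.ip_address(first)
        self._last = ipaddress.ip_address(last)
        if self._next not in self.network or self._last not in self.network:
            raise ValueError("allocation range must fall inside the pool subnet")
        self.allocations: Dict[str, ipaddress.IPv4Address] = {}

    def allocate(self, node: str) -> ipaddress.IPv4Address:
        """Return the node's TEP address, allocating the next free one on first use."""
        if node in self.allocations:
            return self.allocations[node]
        if self._next > self._last:
            raise RuntimeError("TEP IP pool exhausted")
        address = self._next
        self._next += 1
        self.allocations[node] = address
        return address


pool = TepIpPool("172.16.2.0/24", "172.16.2.11", "172.16.2.12")  # made-up values
print(pool.allocate("esxi-01"))  # 172.16.2.11
print(pool.allocate("esxi-02"))  # 172.16.2.12
print(pool.allocate("esxi-01"))  # 172.16.2.11 (already allocated)
```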

The uplink profile for compute transport nodes is selected.

Using the compute transport node profile created earlier, the compute hosts are configured as compute transport nodes.

Effectively, the N-VDS is installed on the compute hosts along with the appropriate transport zones, ESXi and Overlay.

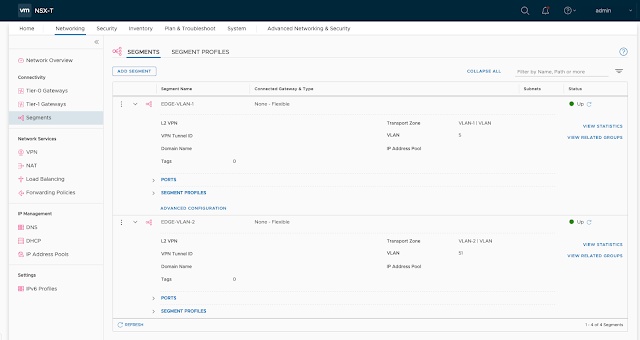

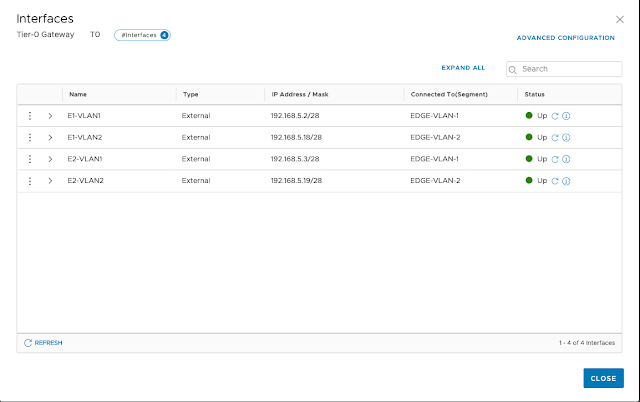

The above segments are created for the external interfaces of the Tier 0 Gateway.

Segment EDGE-VLAN-1 uses VLAN tag 5 and segment EDGE-VLAN-2 uses VLAN tag 51.

When the edge uses the N-VDS of the compute host for uplink connectivity, the TEPs on the compute hosts and the TEPs on the edge should be in two different subnets.
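A quick pre-check of the subnet rule above can be sketched with Python's standard `ipaddress` module. The function name and the CIDR values are hypothetical; substitute the subnets of your compute TEP pool and edge TEP pool.

```python
import ipaddress


def tep_subnets_ok(compute_tep_cidr: str, edge_tep_cidr: str) -> bool:
    """True when the compute-host TEP subnet and the edge TEP subnet do not overlap."""
    compute = ipaddress.ip_network(compute_tep_cidr)
    edge = ipaddress.ip_network(edge_tep_cidr)
    return not compute.overlaps(edge)


print(tep_subnets_ok("172.16.2.0/24", "172.16.3.0/24"))    # True
print(tep_subnets_ok("172.16.2.0/24", "172.16.2.128/25"))  # False
```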

Additional teaming policies have been created to pin traffic on the VLAN-backed segments.

The above transport zones are used on edge transport nodes:

- Overlay transport zone for Geneve-encapsulated traffic
- VLAN-1 transport zone, used for peering with upstream router 1
- VLAN-2 transport zone, used for peering with upstream router 2

N-VDS settings on Edge Transport Node

The appropriate TEP pool for the edge has been selected.

The uplink profile for the edge has been selected.

Notice that fp-eth0 and fp-eth1 on the Edge Node VM are uplinked to Edge-Trunk-Uplink-1 and Edge-Trunk-Uplink-2 respectively, which are VLAN-backed segments on the N-VDS of the compute host.
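Because these trunk segments carry tagged traffic from inside the Edge Node VM, every VLAN tag the edge sends on fp-eth0 and fp-eth1 (for example the EDGE-VLAN-1 and EDGE-VLAN-2 tags, 5 and 51) has to fall within the trunk segment's VLAN range. The sketch below checks that; the fp-eth wiring comes from the text, while the trunk ranges and the tags expected on each vNIC are hypothetical values chosen for illustration.

```python
from typing import Dict, List, Tuple

VlanRanges = List[Tuple[int, int]]


def trunk_allows(ranges: VlanRanges, vlan: int) -> bool:
    """True if the VLAN ID falls inside any inclusive (low, high) range of a trunk segment."""
    return any(low <= vlan <= high for low, high in ranges)


def dropped_vlans(
    wiring: Dict[str, str],
    trunks: Dict[str, VlanRanges],
    needed: Dict[str, List[int]],
) -> Dict[str, List[int]]:
    """For each edge vNIC, list the VLAN tags its trunk segment would not carry."""
    report: Dict[str, List[int]] = {}
    for vnic, vlans in needed.items():
        ranges = trunks[wiring[vnic]]
        missing = [v for v in vlans if not trunk_allows(ranges, v)]
        if missing:
            report[vnic] = missing
    return report


# Wiring from the text; trunk ranges and per-vNIC tags are hypothetical.
wiring = {"fp-eth0": "Edge-Trunk-Uplink-1", "fp-eth1": "Edge-Trunk-Uplink-2"}
trunks = {"Edge-Trunk-Uplink-1": [(0, 4094)], "Edge-Trunk-Uplink-2": [(50, 60)]}
print(dropped_vlans(wiring, trunks, {"fp-eth0": [5], "fp-eth1": [51]}))     # {}
print(dropped_vlans(wiring, trunks, {"fp-eth0": [5], "fp-eth1": [5, 51]}))  # {'fp-eth1': [5]}
```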

After the Edge Node VMs are configured, configure an Edge Node Cluster as shown below.

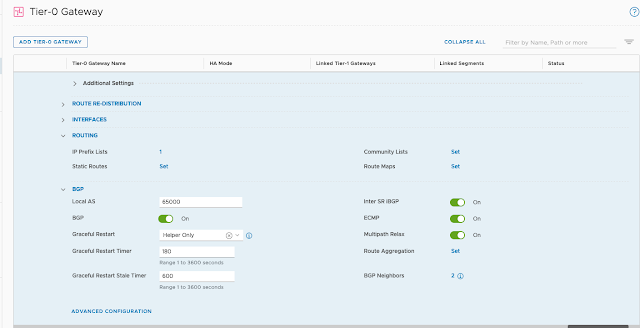

The Tier 0 Gateway requires an edge node cluster for peering with the physical network.

Active-Active high availability mode is used in this lab.

Configure BGP neighbors on the Tier 0 Gateway.

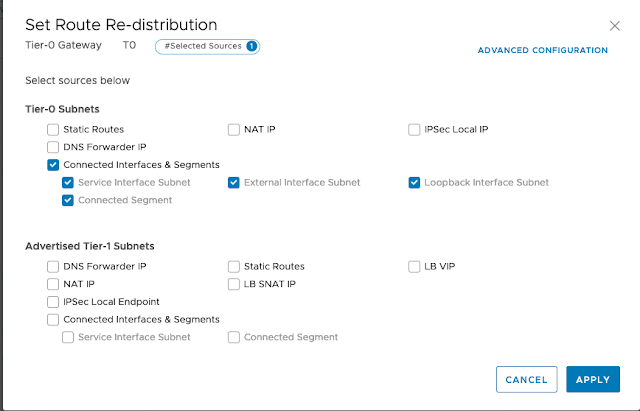

Configure route redistribution on the Tier 0 Gateway.

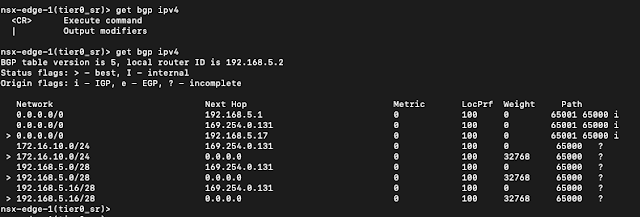
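Route redistribution acts as a filter on route source types: only routes whose type is enabled are handed to BGP and advertised to the upstream routers. The sketch below models that idea. The source-type labels, prefixes and enabled set are hypothetical illustrations, not NSX-T identifiers or this lab's settings.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class Route:
    prefix: str
    source: str  # illustrative labels such as "T0_CONNECTED" or "T0_STATIC"


def redistribute(routes: Iterable[Route], enabled: Set[str]) -> List[str]:
    """Return, sorted, the prefixes whose source type is enabled for redistribution into BGP."""
    return sorted(r.prefix for r in routes if r.source in enabled)


# Hypothetical routing table on the Tier 0 Gateway.
routes = [
    Route("10.10.10.0/24", "T0_CONNECTED"),  # an overlay segment attached to the gateway
    Route("172.16.5.0/24", "T0_CONNECTED"),  # an external interface subnet
    Route("0.0.0.0/0", "T0_STATIC"),         # a static default route
]
print(redistribute(routes, {"T0_CONNECTED"}))  # ['10.10.10.0/24', '172.16.5.0/24']
```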

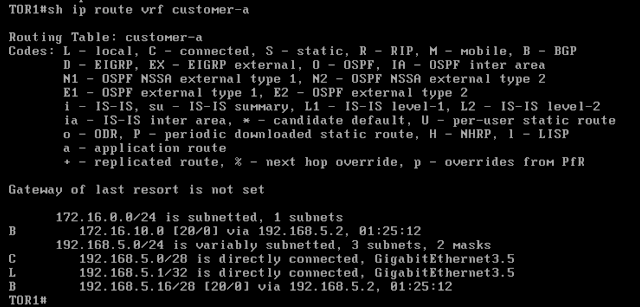

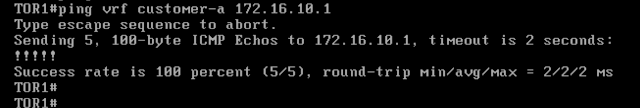

The following command output is from TOR1: