Multiple VDS Instances for Overlay on Compute Hosts

This use case is also covered in the NSX-T Reference Design Guide, which notes that starting with NSX 3.1 a host can have virtual switches that belong to different overlay transport zones, and the TEPs on each virtual switch can be on different VLANs/IP subnets (all the TEPs on an individual switch must still be in the same subnet). When planning such a configuration, remember that while hosts can be part of different overlay transport zones, edge nodes cannot.

NSX-T allows the creation of overlay networks that are independent of the networks in the physical (underlay) network.

Along with overlay networking, the NSX micro-segmentation (zero trust) model is widely used, both on NSX-defined overlay networks and on traditional VLAN-backed networks.

Architecturally, the NSX-T solution has components that sit in the management/control plane and components that sit in the data plane:

1. Management plane, which consists of the NSX-T Managers. They are responsible for the management plane as well as the control plane.

2. Data plane, which consists of:

a. Compute hosts, which are prepared as host transport nodes and typically host the workloads connected to overlay networks.

b. Edges, which provide north-south connectivity with the physical network. They can also be leveraged for services such as load balancing, edge firewall, NAT and DHCP.

Edges are used to create Tier 0 Gateways, which sit between the physical network and the Tier 1 Gateways. Tier 1 Gateways are the gateways to which overlay networks are typically connected.

A typical NSX-T deployment has one N-VDS, or one VDS with NSX (the preferred option for vSphere and vCenter 7.0 and above), on the compute host, which handles both overlay traffic and VLAN traffic.

Note: With vSphere and vCenter 7.0 and above, it is highly recommended to use VDS for NSX with NSX version 3.0 and above.

This lab setup is based on older NSX and vSphere releases, hence the N-VDS construct. For newer vSphere, vCenter and NSX releases, it is highly recommended to use VDS for NSX.

Here we explore how to deploy multiple N-VDS instances (or VDS with NSX) on a host to provide uplink connectivity from the host to two different environments, for example a Data Center Zone and a DMZ Zone.

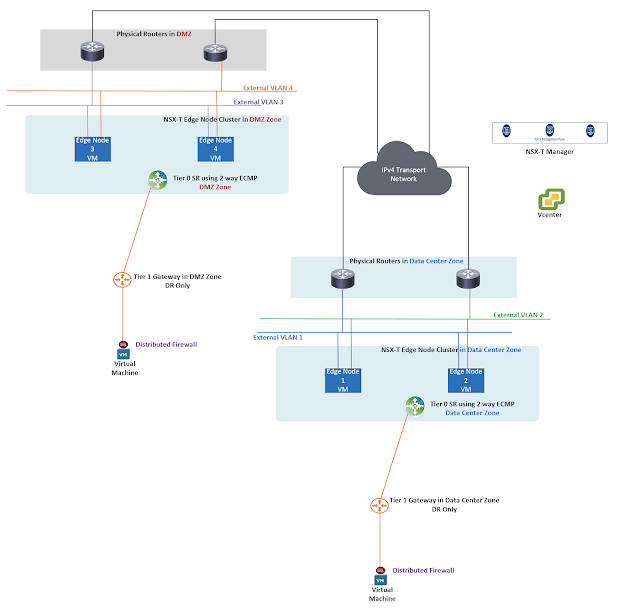

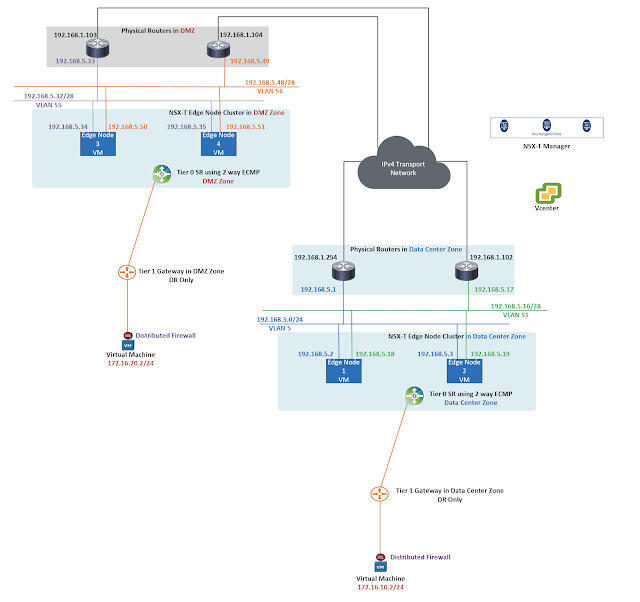

I have used the logical setup below in this lab.

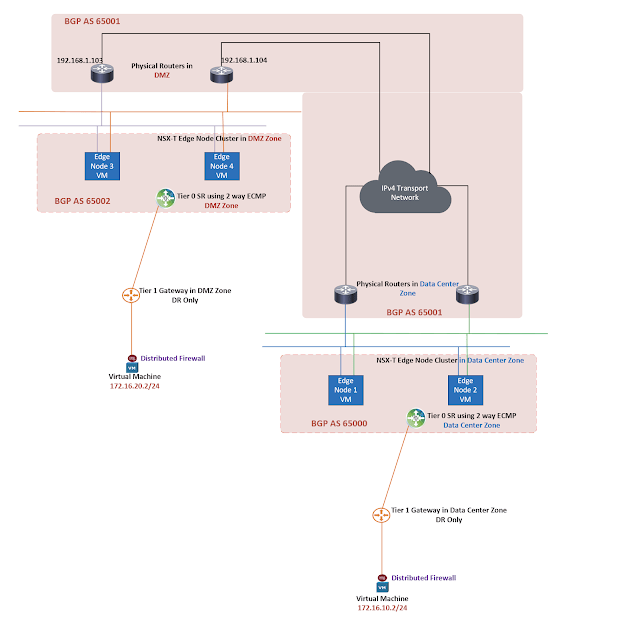

The edges in this setup have been used for Tier 0 Gateways.

There are two Tier 0 Gateways in this setup, one for Data Center Zone and the other for DMZ zone.

Edge clustering provides high availability at Tier 0 Gateway level as well as at Tier 1 Gateway level.

Availability mode at the Tier 0 Gateway level can be Active-Active, or Active-Standby if any stateful service is to be consumed on the Tier 0 Gateway.

Availability mode at the Tier 1 Gateway level is Active-Standby when any service is to be used on that specific Tier 1 Gateway.

Note: With newer NSX releases, it is also possible to use Active-Active HA mode on a Tier 1 Gateway for stateful services such as NAT and gateway firewall.

If no service (NAT, edge firewall, load balancing, VPN) is required on the Tier 1 Gateway, do not associate the Tier 1 Gateway with an edge cluster. This avoids potential hairpinning of traffic through the edge.
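The Tier 1 placement rule can be expressed as a small check. This is only a minimal sketch: the service labels and the function name `tier1_needs_edge_cluster` are hypothetical and are not part of any NSX API.

```python
# Services the text lists as requiring an edge cluster on a Tier 1 Gateway.
# The string labels are illustrative, not NSX identifiers.
EDGE_SERVICES = {"nat", "edge_firewall", "load_balancing", "vpn"}


def tier1_needs_edge_cluster(requested_services):
    """Return True if at least one requested service must run on an edge node."""
    requested = {service.lower() for service in requested_services}
    return bool(EDGE_SERVICES & requested)


# A Tier 1 Gateway that only does distributed routing stays off the edge cluster.
print(tier1_needs_edge_cluster([]))
# A Tier 1 Gateway that needs NAT has to be associated with an edge cluster.
print(tier1_needs_edge_cluster(["NAT"]))
```

Keeping service-free Tier 1 Gateways off the edge cluster means their routing stays distributed on the hosts, which is what avoids the hairpinning mentioned above.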

Fabric Preparation

N-VDS instances on compute host

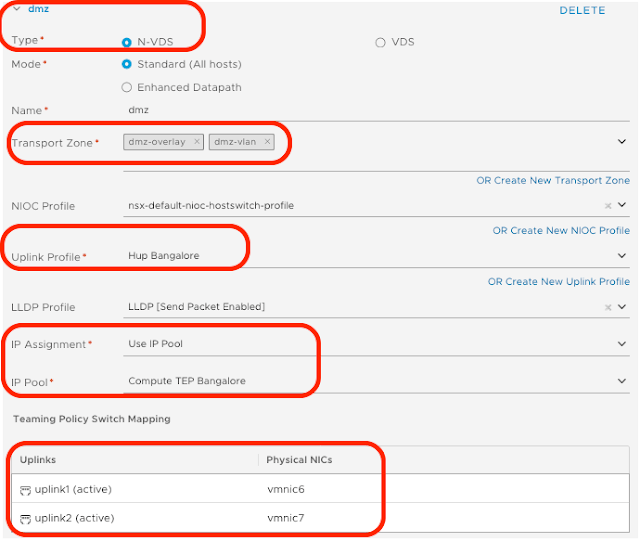

As shown in the diagram above, two N-VDS instances are installed on the compute host once the host is prepared for NSX.

Note: For newer versions of NSX, vSphere and vCenter Server, replace N-VDS on the host with VDS.

A few things are required before the hosts can be prepared for NSX:

a. NSX Manager should be installed.

b. vCenter Server should be added as a compute manager in NSX-T Manager.

c. Tunnel Endpoint (TEP) pools are to be defined; they take care of TEP IP assignment on hosts and edges.

TEP interfaces on hosts/edges are responsible for encapsulation/decapsulation of Geneve traffic. Geneve is the overlay protocol used in NSX-T.

d. Uplink profiles are to be created for edges and hosts respectively.

e. A compute transport node profile should be defined.
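Because the TEPs wrap every guest frame in Geneve, the TEP (underlay) network needs a larger MTU than the guest network. The sketch below is a back-of-the-envelope calculation, assuming an IPv4 underlay and an untagged inner frame. The header sizes are standard (the Geneve base header is 8 bytes per RFC 8926), but the option allowance is an assumed parameter because Geneve options are variable in length.

```python
# Header sizes in bytes for an IPv4 underlay.
INNER_ETHERNET = 14  # inner Ethernet header carried inside the tunnel (no VLAN tag)
GENEVE_BASE = 8      # fixed Geneve header; options are added on top of this
OUTER_UDP = 8
OUTER_IPV4 = 20


def required_underlay_mtu(guest_mtu, geneve_options=0):
    """IP MTU needed on the TEP network to carry a guest packet unfragmented."""
    return (guest_mtu + INNER_ETHERNET + GENEVE_BASE + geneve_options
            + OUTER_UDP + OUTER_IPV4)


print(required_underlay_mtu(1500))      # 1550
print(required_underlay_mtu(1500, 50))  # 1600, with an assumed 50 bytes of options
```

This is why an MTU of at least 1600 is commonly configured on the TEP network for a 1500-byte guest MTU: it leaves headroom for Geneve options.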

Uplink profiles for edges and hosts

An uplink profile contains the TEP VLAN ID, active uplink names, teaming policy and MTU setting.
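To make the four settings concrete, here is a minimal sketch that builds an uplink profile as a plain dictionary. The field names follow my understanding of the `UplinkHostSwitchProfile` object in the NSX-T Manager API and should be checked against the API reference for your release. The helper `build_uplink_profile`, the profile name, the VLAN ID and the uplink names are all hypothetical and are not taken from the lab.

```python
import json


def build_uplink_profile(name, transport_vlan, active_uplinks,
                         mtu=1600, policy="FAILOVER_ORDER"):
    """Assemble the four settings of an uplink profile into one payload.

    Field names are assumed to match the NSX-T UplinkHostSwitchProfile schema.
    """
    return {
        "resource_type": "UplinkHostSwitchProfile",
        "display_name": name,
        "transport_vlan": transport_vlan,  # TEP VLAN ID
        "mtu": mtu,
        "teaming": {
            "policy": policy,
            "active_list": [
                {"uplink_name": uplink, "uplink_type": "PNIC"}
                for uplink in active_uplinks
            ],
        },
    }


# Illustrative values only.
profile = build_uplink_profile("dc-host-uplink-profile", 100, ["uplink-1", "uplink-2"])
print(json.dumps(profile, indent=2))
```

Separate profiles for hosts and edges let each use its own TEP VLAN and uplink names, which matches the "edges and hosts respectively" requirement listed earlier.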

Overlay networks/segments are created using an overlay transport zone.

VLAN-backed networks/segments can be created using a VLAN transport zone.

The above transport zones are used for this lab setup.

Trunk segments used for uplink connectivity of the edges utilize the VLAN transport zone on the host.

Next, a compute transport node profile is created and mapped to the single cluster used in this setup.

Please note that in this lab setup, the TEP pools used are the same on both N-VDS instances.

Important note from the reference design guide for version 3.2:

TEPs on each virtual switch can be on different VLANs/IP subnets.

The compute transport node profile is then mapped to the cluster so that the cluster is prepared for NSX.

At this point the NSX software is pushed to the hosts, the defined N-VDS instances are created on the hosts, and the TEP interfaces are created on the hosts.
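The design guide rule has two halves: different switches may use different TEP subnets, but all TEPs on one switch must share a subnet. The sketch below checks the second half with Python's standard `ipaddress` module. The switch names, subnets and addresses are hypothetical; this lab actually uses the same TEP pool on both switches.

```python
from ipaddress import ip_address, ip_network

# Hypothetical inventory: switch name -> (TEP subnet, TEP IPs on that switch).
host_switches = {
    "dc-switch": ("172.16.10.0/24", ["172.16.10.11", "172.16.10.12"]),
    "dmz-switch": ("172.16.20.0/24", ["172.16.20.11", "172.16.20.12"]),
}


def teps_outside_subnet(switches):
    """Return {switch: [TEP IPs that fall outside that switch's TEP subnet]}."""
    violations = {}
    for name, (subnet, teps) in switches.items():
        network = ip_network(subnet)
        stray = [tep for tep in teps if ip_address(tep) not in network]
        if stray:
            violations[name] = stray
    return violations


# An empty result means every switch keeps its TEPs in a single subnet.
print(teps_outside_subnet(host_switches))
```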

Data Center N-VDS and DMZ N-VDS on the compute host

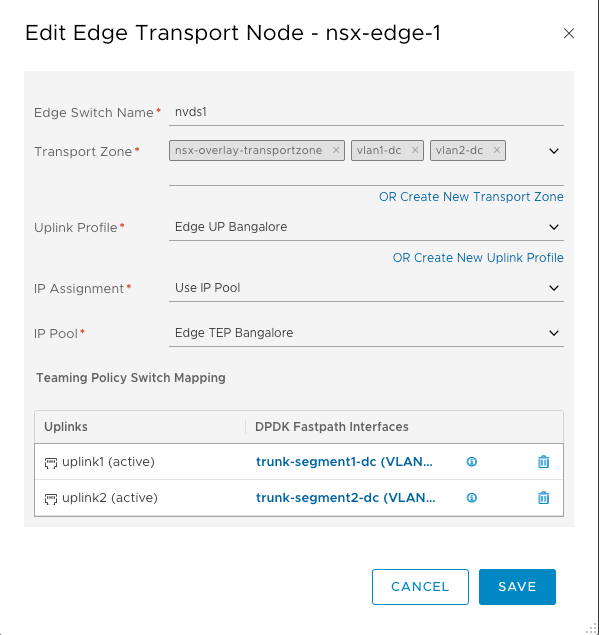

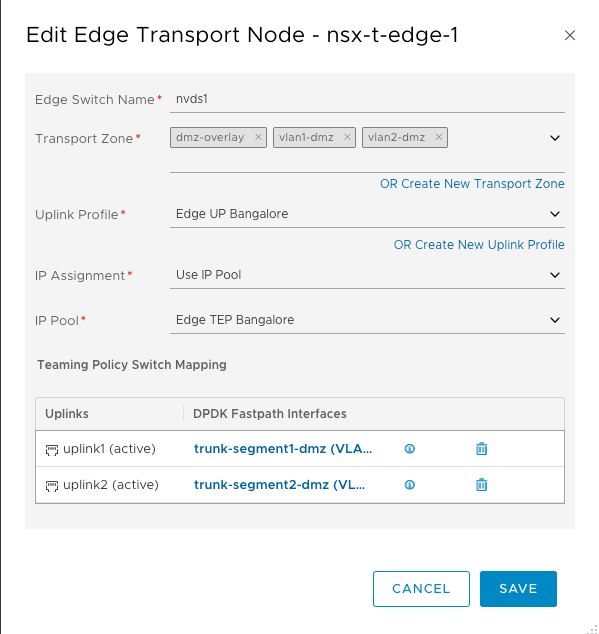

We now move on to the edges.

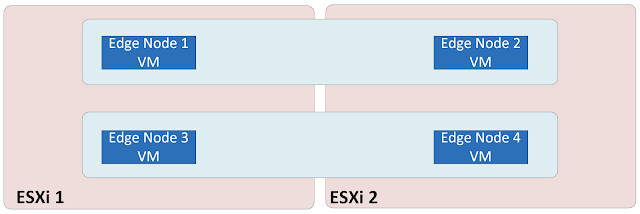

Edge placement in the lab is as below.

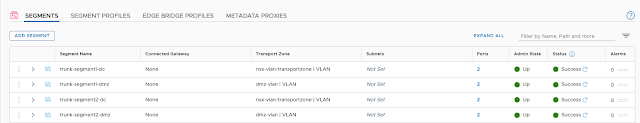

Appropriate VLAN-backed segments/networks are created in NSX-T Manager for uplink connectivity of the edges.

Note that the appropriate transport zones are selected when the VLAN-backed trunk segments are created on the host N-VDS.

Next, the edges are deployed.

The edge also has an N-VDS, which handles VLAN traffic towards the physical network and overlay traffic to/from the compute hosts. Note that N-VDS is the only switch type configurable on an NSX edge node.

The edge for the Data Center Zone is connected to the N-VDS allocated for the Data Center Zone, and the edge for the DMZ Zone is connected to the N-VDS allocated for the DMZ Zone.

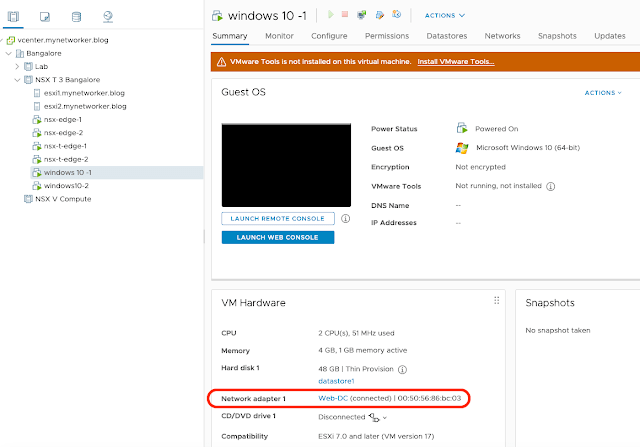

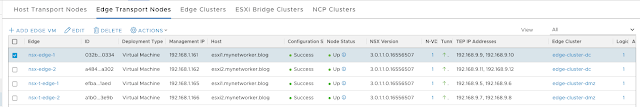

Edges are configured for NSX

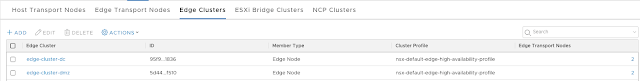

Four edges are deployed, two for Data Center Zone and two for DMZ Zone.

Edge Clusters are created appropriately.
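The edge layout (four edges, two per zone, one edge cluster per zone) can be captured in a small data structure and checked against two points from the text: edge nodes cannot belong to more than one overlay transport zone, and clustering is what provides high availability. This is a minimal sketch; all edge, cluster and transport zone names are hypothetical.

```python
from collections import defaultdict

# Hypothetical inventory mirroring the lab layout: two edges per zone.
edges = [
    {"name": "edge-dc-01", "cluster": "dc-edge-cluster", "overlay_tzs": ["dc-overlay-tz"]},
    {"name": "edge-dc-02", "cluster": "dc-edge-cluster", "overlay_tzs": ["dc-overlay-tz"]},
    {"name": "edge-dmz-01", "cluster": "dmz-edge-cluster", "overlay_tzs": ["dmz-overlay-tz"]},
    {"name": "edge-dmz-02", "cluster": "dmz-edge-cluster", "overlay_tzs": ["dmz-overlay-tz"]},
]


def check_edge_layout(edge_list):
    """Return a list of human-readable problems; an empty list means the layout is fine."""
    problems = []
    members = defaultdict(list)
    for edge in edge_list:
        members[edge["cluster"]].append(edge["name"])
        if len(edge["overlay_tzs"]) > 1:
            problems.append(
                f"{edge['name']}: edge nodes cannot span multiple overlay transport zones"
            )
    for cluster, names in members.items():
        if len(names) < 2:
            problems.append(f"{cluster}: fewer than two edges, so no high availability")
    return problems


print(check_edge_layout(edges))
```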

Gateway Configuration and Routing

VLAN-backed segments are created for use when creating Layer 3 interfaces on the Tier 0 Gateways.

Segments for creating L3 interfaces on Tier 0 Gateways

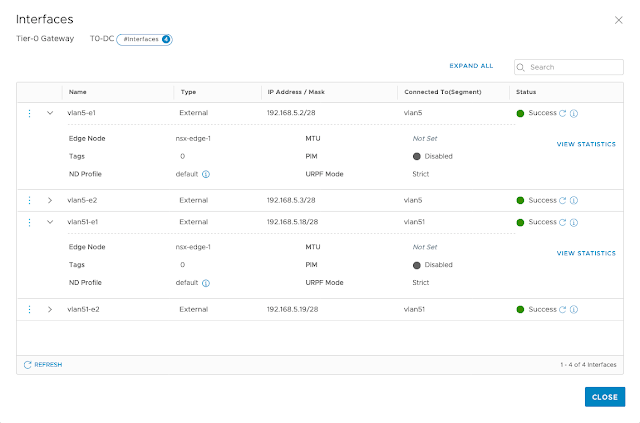

VLAN 5 and VLAN 51 are used on the Tier 0 Gateway for the Data Center Zone.

VLAN 55 and VLAN 56 are used on the Tier 0 Gateway for the DMZ Zone.

Next, the Tier 0 Gateways are defined and Layer 3 interfaces are created on them using the IP addressing shown in the diagram above.
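The VLAN assignment can be written down as a mapping and checked for accidental reuse between the two zones. The VLAN IDs come from the lab; the gateway names and the assumption that the zones should not share an uplink VLAN are mine.

```python
# Uplink VLANs per Tier 0 Gateway. VLAN IDs are from the lab; names are hypothetical.
t0_uplink_vlans = {
    "t0-datacenter": {5, 51},
    "t0-dmz": {55, 56},
}


def overlapping_vlans(vlans_by_gateway):
    """Return {vlan: [gateways]} for every VLAN used by more than one gateway."""
    owners = {}
    for gateway, vlans in vlans_by_gateway.items():
        for vlan in vlans:
            owners.setdefault(vlan, []).append(gateway)
    return {vlan: gws for vlan, gws in owners.items() if len(gws) > 1}


# An empty result means the two zones use disjoint uplink VLANs.
print(overlapping_vlans(t0_uplink_vlans))
```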

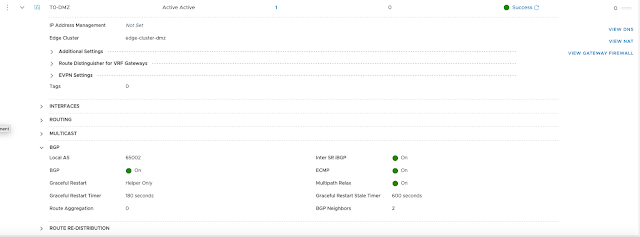

When the Tier 0 Gateway for the Data Center Zone is created, the edge cluster for the Data Center Zone is selected and the availability mode on the Tier 0 Gateway is set to Active-Active.

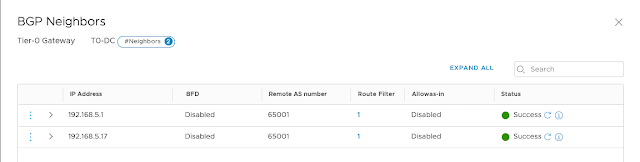

From a routing perspective, the upstream routers should advertise a default route to their eBGP peers, which are the edges.

NSX routes are redistributed into BGP on the NSX end.

Tier 1 Gateways advertise routes towards the Tier 0 Gateway.

There is no routing protocol between the Tier 0 Gateway and the Tier 1 Gateways.

Please note that inter-SR iBGP peering can be enabled when the availability mode on the Tier 0 Gateway is Active-Active; this is preferred.

Next, create the Tier 1 Gateways and connect them to the appropriate Tier 0 Gateway.
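The resulting forwarding behaviour on a Tier 0 Gateway can be pictured with a toy longest-prefix-match lookup: specific overlay prefixes advertised by a Tier 1 Gateway win over the default route learned from the upstream router. This is a simplified model rather than how NSX implements routing, and the 10.10.1.0/24 segment prefix is a made-up example.

```python
from ipaddress import ip_address, ip_network

# Toy Tier 0 routing table: (prefix, next hop description). Prefixes are illustrative.
t0_routes = [
    (ip_network("0.0.0.0/0"), "upstream router (default route learned over eBGP)"),
    (ip_network("10.10.1.0/24"), "Tier 1 Gateway (advertised overlay segment)"),
]


def next_hop(destination, routes):
    """Pick the matching route with the longest prefix, or None if nothing matches."""
    dest = ip_address(destination)
    matches = [(net, hop) for net, hop in routes if dest in net]
    if not matches:
        return None
    return max(matches, key=lambda match: match[0].prefixlen)[1]


print(next_hop("10.10.1.25", t0_routes))  # goes down to the Tier 1 Gateway
print(next_hop("8.8.8.8", t0_routes))     # follows the default route upstream
```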

Tier 1 Gateways are configured

Routes on the Tier 1 Gateways are advertised towards the upstream Tier 0 Gateway.

Overlay segments are created for the Data Center Zone and for the DMZ Zone respectively.

These overlay networks are connected to the corresponding Tier 1 Gateways.

Once the overlay networks are created, workloads can be connected to them from vCenter.

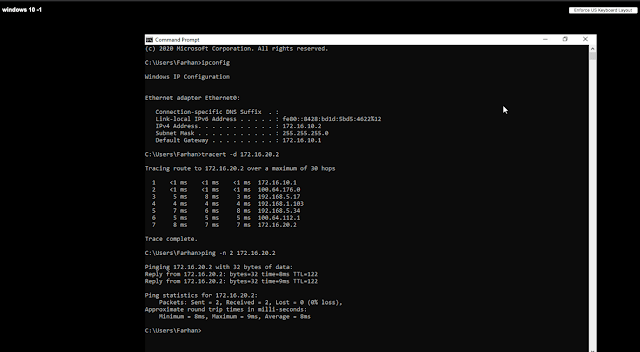

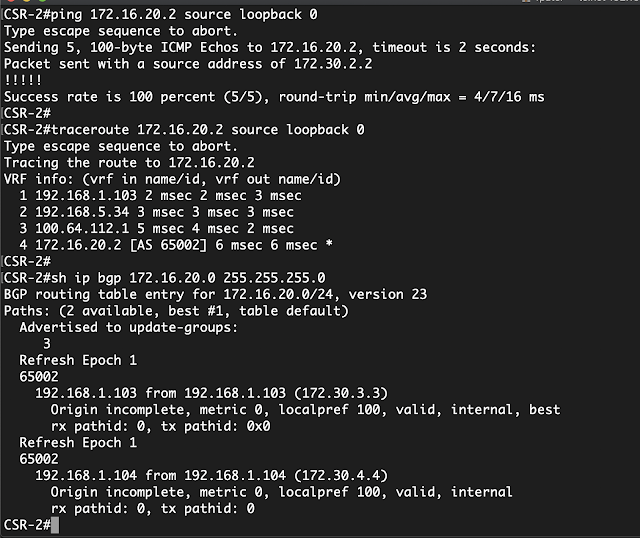

Validation

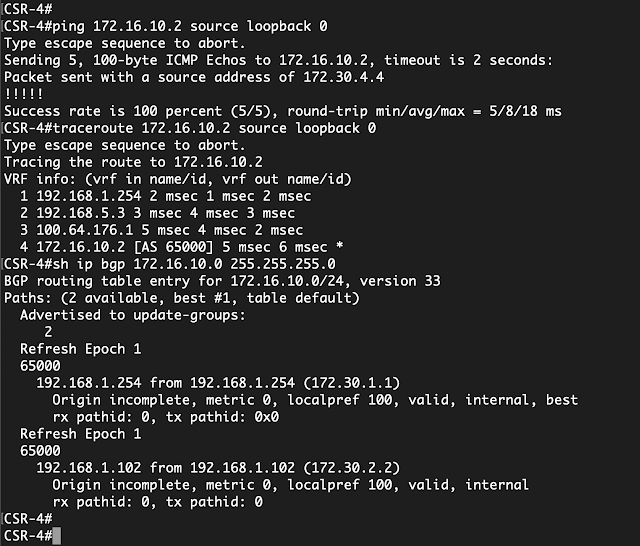

Ping/trace from physical router in DC Zone to VM in DMZ

Ping/trace from physical router in DMZ to VM in DC Zone